How CodeLogician works

AI coding agents normally reason statistically. CodeLogician makes them build a mathematical mental model and analyze it.

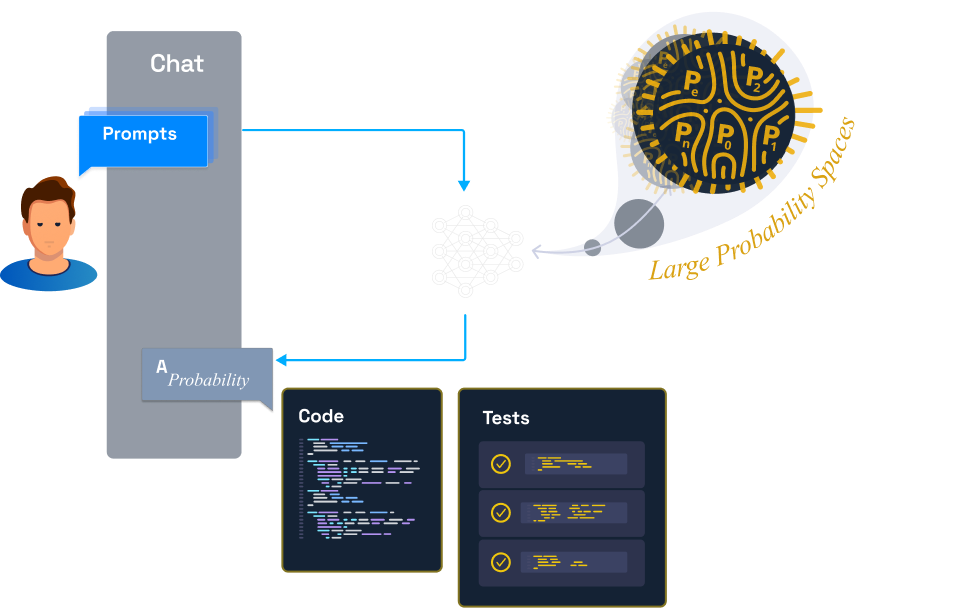

LLM Agent only

- Samples likely paths

- Edge cases are easy to miss

- Humans must manually validate behavior

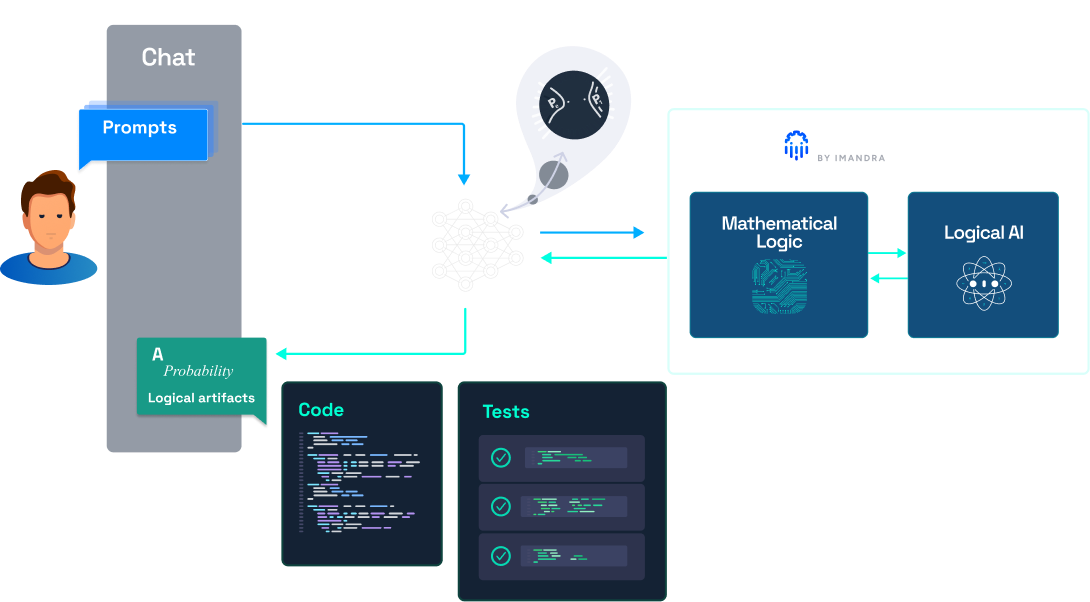

LLM Agent + CodeLogician

- Decision logic is formalized

- Reasoning engine explores behavior

- Artifacts expose boundaries and edge cases

CodeLogician turns AI coding from “looks right” into “behavior understood.”

Tackle Complexity with Math and Logic

CodeLogician activates when code starts encoding rules, states, or business logic — forcing the agent to translate its understanding into structured logic and using a reasoning engine to surface edge cases, decision boundaries, and invariants that LLMs alone struggle to uncover.

Not all code needs deep reasoning. But systems that encode decisions — payments, access control, workflows, distributed services — create behavioral complexity that statistical AI alone can't fully explore.

LLMs Alone Are Great For

- UI rendering and formatting

- CRUD services and wrappers

- Data transformations

- Simple scripts

CodeLogician Activates For

- State machines and workflows

- Financial and rule engines

- Access control and policy systems

- Distributed system coordination

- Complex integration logic

Read the full Tackle Complexity with Math and Logic guide here, or the Managing Complexity with Math and Logic article on Medium.

CodeLogician Performance Metrics

Comprehensive evaluation of LLM performance across multiple models and metrics

Model rankings

Benchmark data and analysis in the code-logic-bench repository.

Neurosymbolic AI for Code

CodeLogician™ is powered by ImandraX, our award-winning automated reasoning engine that combines formal verification, symbolic reasoning, and rule synthesis to provide mathematical guarantees about your code's behavior.

Real-world industrial applications in safety critical industries

ImandraX is widely used in safety-critical industries such as financial markets, robotics and autonomy, and model-based systems engineering in government and defense.

Formal verification

Mathematical verification of algorithm properties with powerful reasoning automation powered by ImandraX -- bringing formal proofs and counterexamples seamlessly into the software engineering workflow.

Region decomposition

Inspired by Cylindrical Algebraic Decomposition, this technique decomposes algorithm state-spaces into regions where system behavior is invariant, effectively computing a map of all possible system behaviors.

Seamless integration of bounded and unbounded verification

Seamlessly combine bounded model-checking and unbounded inductive proof through ImandraX. Every goal can be subjected to both bounded verification (complete for counterexamples in a precise sense) as well as unbounded verification, e.g., using Imandra's decision procedures and higher-order lifting of Boyer-Moore style automated induction.

Counter-examples

ImandraX is complete for counterexamples in a precise sense. Automatically synthesizes deep counterexamples to incorrect verification conjectures, even with recursive functions and nonlinear arithmetic, aiding discovery and eliminating time sinks from trying to prove false goals.

Imandra Discover

Discover program properties and lemmas, extract logical patterns from data (e.g., logs) and synthesize them into executable and auditable models. Identify anomalies in out-of-sample data and generate corrective actions for detected issues.

Learn more about the reasoning engine at imandrax.dev.

What developers use it for

From day-to-day code review to high-stakes system verification.

Refactor safely

Prove your refactored function is behaviorally identical to the original — or see precisely where behavior changes.

Catch bugs before production

Give CodeLogician the function and it exhaustively maps all inputs that cause unexpected outputs — before a single line ships.

Equivalence checking

Compare two implementations for semantic equivalence — useful for migrations, API versioning, and shadow deployments.

Prove invariants

Establish formal guarantees that hold across all inputs — account balances, state machine transitions, security properties.

Built for systems that can't be wrong

Payment flows

Charge logic, refunds, idempotency

Pricing logic

Discounts, tiers, edge conditions

Auth & sessions

Token expiry, permission boundaries

Matching engines

Order books, fairness, settlement

Agent plans

Agentic loop invariants, tool safety

Explore demos and examples in the repo.

Pricing

Available plans

Select a plan that scales with your automated reasoning needs, from building AI agents with reasoning skills to deploying enterprise-grade formal verification.

Imandra Universe is the ecosystem through which ImandraX, CodeLogician, and other reasoning tools are delivered.

Builder

Most PopularBuilt for individual builders who need predictable usage.

Pro

For advanced users running heavier reasoning workloads.

Team

Collaboration and scale for teams shipping reasoning systems.

In the press and on paper

Peer-reviewed research, press coverage, and video walkthroughs of CodeLogician and the reasoning engine behind it.

Managing complexity with math and logic: changing Stripe payment flow with Claude and CodeLogician

How to use CodeLogician with Claude to refactor and verify a Stripe payment flow — managing complexity with formal logic and automated reasoning instead of guesswork.

Formal AI Reasoning with ImandraX & CodeLogician | Session 1 of AI Reasoning Weekly

Grant Passmore and Denis Ignatovich introduce ImandraX, a theorem prover and proof assistant built for engineers, and CodeLogician, which plugs formal reasoning directly into AI coding agents like Claude Code.

Vibe Coding was phase 1. Logic-first AI is phase 2. It is here now.

How CodeLogician makes AI coding agents reason explicitly instead of guessing — the shift from probabilistic generation to structured, verifiable logic.

How Formal Reasoning Extends LLM Capabilities for Software Analysis

We introduce a benchmark targeting mathematical reasoning about real software logic. Across all tested models, augmenting LLMs with formal reasoning via CodeLogician closes a 41–47 percentage point accuracy gap compared to LLM-only reasoning.

A Gentle Intro to ImandraX and CodeLogician

Denis Ignatovich (Co-Founder, Imandra) walks through the core ideas behind ImandraX and CodeLogician — how automated reasoning integrates with AI coding agents.

Imandra Unveils Imandra Universe: The Platform for Neurosymbolic AI Agents

Imandra launches Imandra Universe — a unified platform bringing neurosymbolic AI and logical reasoning to software development teams at scale.

For more research, press, and videos, see the media page.